If you want to put Wikipedia's extensive data to work, a scraping API is one of the most practical ways to extract that information efficiently and responsibly.

This guide explains how Wikipedia's content is structured, what you gain by collecting data through an API, how to choose the right one for your needs, and how to integrate it into your workflow. It also covers the legal and ethical aspects of data scraping, so you can draw on Wikipedia's wealth of knowledge without overstepping.

Key Takeaways

- Understanding the structure of Wikipedia articles is crucial for efficient data extraction.

- Utilizing a scraping API saves time, reduces errors, and allows for automatic updates.

- When choosing a Wikipedia API, consider factors like reliability, speed, and ease of use.

- Implementing the selected API effectively enables access to real-time, accurate information from Wikipedia.

Understanding Wikipedia’s Structure

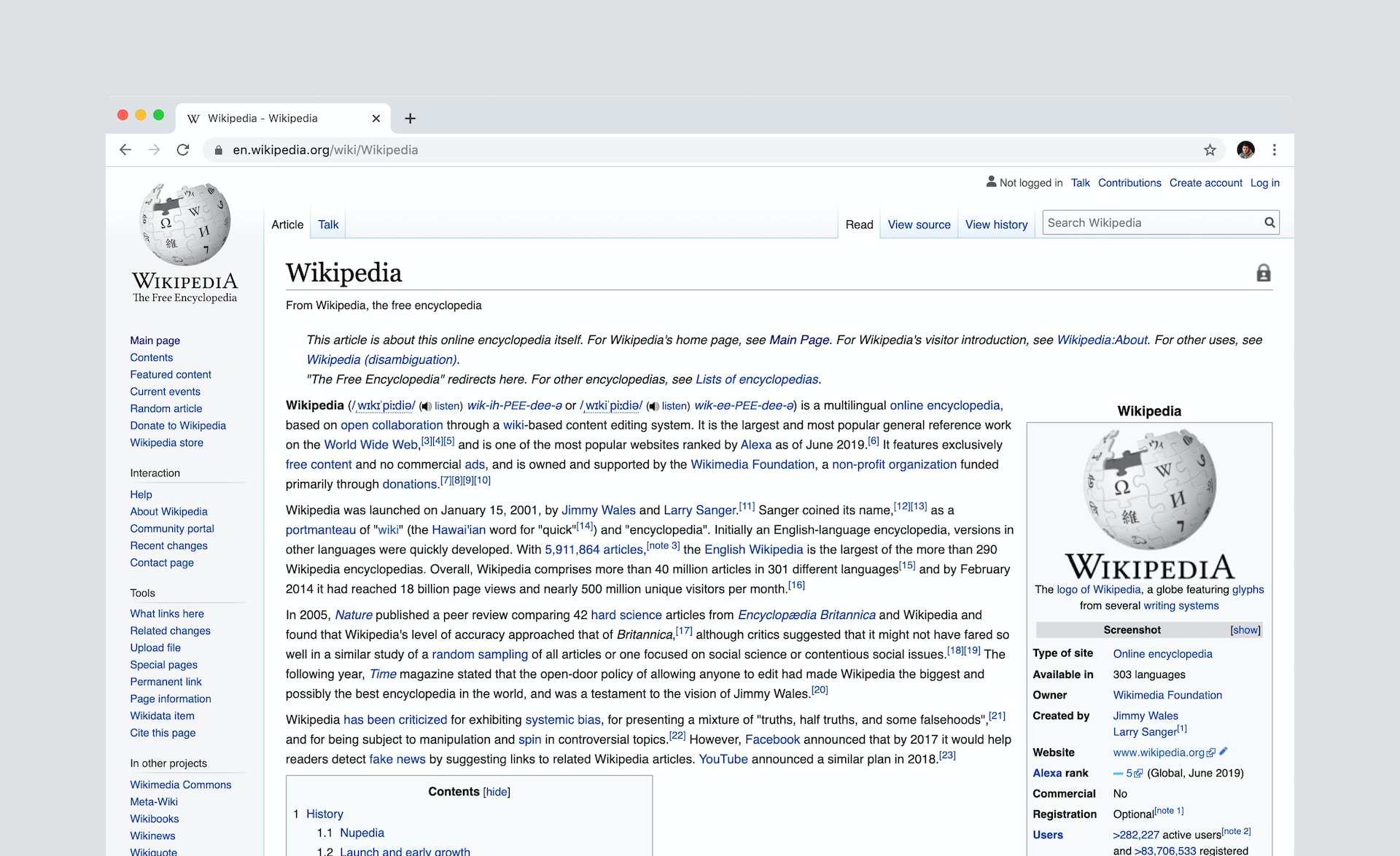

Before you dive into scraping Wikipedia, it’s crucial to grasp how its content is organized and structured.

Articles are grouped into topic categories, which makes related pages easy to find. Each article also follows a consistent layout: a lead section, headings that divide the body into sections, references at the end, and, on many pages, an infobox summarizing key facts.

Knowing this layout lets you target exactly the data you need. Pay attention to these elements; they are your roadmap for successful scraping.
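To make the section structure concrete, here is a minimal sketch that lists the headings in an article's raw wikitext, which the MediaWiki API can return (for example via `action=parse` with `prop=wikitext`). The sample text is invented and abridged, and the regex is a simplification: templates or HTML comments can confuse it, so a dedicated wikitext parser such as mwparserfromhell is a safer choice for real pages. The function name `list_sections` is hypothetical.

```python
import re
from typing import List, Tuple

# Wikitext headings look like "== History ==" or "=== Early life ===".
# The number of '=' signs is the heading level (2 = top-level section).
HEADING_RE = re.compile(r"^(={2,6})\s*(.+?)\s*\1\s*$", re.MULTILINE)


def list_sections(wikitext: str) -> List[Tuple[int, str]]:
    """Return (level, title) pairs for every section heading in the wikitext."""
    return [(len(m.group(1)), m.group(2)) for m in HEADING_RE.finditer(wikitext)]


# Invented, abridged sample: an infobox template, a lead, sections, references.
sample = """{{Infobox programming language
| name = Python
}}
'''Python''' is a high-level programming language.

== History ==
Text of the history section.

=== Early versions ===
Text of the subsection.

== References ==
{{Reflist}}
"""

if __name__ == "__main__":
    for level, title in list_sections(sample):
        # Indent subsections under their parent section.
        print("  " * (level - 2) + title)
```

Note that the infobox (`{{Infobox ...}}`) and the reference list (`{{Reflist}}`) are templates rather than headings, which is why they need separate handling if you want to extract them.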

Benefits of Using a Scraping API

Using a scraping API streamlines data extraction from Wikipedia and makes it both faster and more accurate:

- Save Time: No more spending hours manually sifting through pages.

- Stay Updated: Fetch the latest version of an article on demand or on a schedule, instead of relying on stale copies.

- Minimize Errors: Automated extraction avoids the copy-and-paste mistakes that come with manual collection.

- Easy Integration: Data arrives in a structured format (typically JSON) that plugs directly into your projects.
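As an illustration of the "stay updated" point, one common approach is to compare each article's latest revision ID with the one you saw last time. The sketch below assumes a MediaWiki Action API response to a request such as `action=query&prop=revisions&rvprop=ids|timestamp&titles=...&format=json&formatversion=2`; the sample response and its IDs are invented, and the helper names are hypothetical.

```python
import json
from typing import Dict, List


def latest_revision_ids(response_text: str) -> Dict[str, int]:
    """Map page title -> latest revision ID from a formatversion=2 query response."""
    data = json.loads(response_text)
    result: Dict[str, int] = {}
    for page in data.get("query", {}).get("pages", []):
        revisions = page.get("revisions")
        if revisions:  # missing pages carry no "revisions" list
            result[page["title"]] = revisions[0]["revid"]
    return result


def changed_titles(previous: Dict[str, int], current: Dict[str, int]) -> List[str]:
    """Titles whose latest revision differs from the stored one (or that are new)."""
    return sorted(t for t, rev in current.items() if previous.get(t) != rev)


# Invented sample response: "Alpha" exists, "Beta" does not.
sample_response = json.dumps({
    "batchcomplete": True,
    "query": {"pages": [
        {"pageid": 1, "ns": 0, "title": "Alpha",
         "revisions": [{"revid": 200, "parentid": 150}]},
        {"ns": 0, "title": "Beta", "missing": True},
    ]},
})
```

Only articles returned by `changed_titles` need to be re-downloaded, which keeps your copy current without refetching everything.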

Choosing the Right Wikipedia API

To choose the best Wikipedia API for your needs, consider reliability, speed, ease of use, and the quality of its documentation. Here's a quick comparison to guide you:

| Factor | Why It Matters |
|---|---|
| Reliability | Ensures consistent results |
| Speed | Affects user experience |
| Ease of Use | Simplifies integration |
| Documentation | Helps with troubleshooting |
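Reliability and speed are the two factors you can measure directly. Here is a minimal sketch of a harness that runs a candidate client over a list of titles and reports its success rate and mean latency. It assumes you wrap each candidate API in a function `fetch(title)` that returns data or raises on failure; `benchmark` and `fetch` are hypothetical names, not part of any particular API.

```python
import time
from typing import Callable, Dict, List


def benchmark(fetch: Callable[[str], object], titles: List[str],
              clock: Callable[[], float] = time.perf_counter) -> Dict[str, float]:
    """Call `fetch` once per title; report success rate and mean latency of successes."""
    latencies: List[float] = []
    failures = 0
    for title in titles:
        start = clock()
        try:
            fetch(title)
        except Exception:
            failures += 1  # count any raised error as a reliability failure
            continue
        latencies.append(clock() - start)
    total = len(titles)
    return {
        "success_rate": (total - failures) / total if total else 0.0,
        "mean_latency_s": sum(latencies) / len(latencies) if latencies else float("nan"),
    }
```

Running the same title list through each candidate gives comparable numbers. Ease of use and documentation quality do not reduce to a metric, so judge those by reading each provider's docs.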

Implementing the API for Data Retrieval

Once you've selected a Wikipedia API that meets your criteria for reliability, speed, and ease of use, you need to implement it: build requests for the pages you want, send them, and parse the responses into a form your project can use. Done well, this lets you:

- Tap into Wikipedia's vast knowledge pool programmatically.

- Build projects powered by up-to-date, accurate information.

- Pull rich content into your application with a simple API call.

- Explore a topic at a scale that manual reading can't match.
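The request-and-parse cycle can be sketched against Wikipedia's own MediaWiki Action API, using the `prop=extracts` query (provided by the TextExtracts extension) to get an article's plain text. This is a minimal sketch, not a specific vendor's API; the `User-Agent` string is a placeholder you should replace with your own tool name and contact details, and the function names are hypothetical.

```python
import json
import urllib.parse
import urllib.request

API_URL = "https://en.wikipedia.org/w/api.php"
# Placeholder: Wikimedia asks clients to identify themselves with a descriptive User-Agent.
USER_AGENT = "ExampleResearchBot/0.1 (contact: you@example.com)"


def build_extract_url(title: str) -> str:
    """Build an Action API URL that requests the plain-text extract of one article."""
    params = {
        "action": "query",
        "prop": "extracts",
        "explaintext": 1,   # plain text instead of HTML
        "redirects": 1,     # follow redirects to the target article
        "titles": title,
        "format": "json",
        "formatversion": 2, # pages come back as a list, booleans as true/false
    }
    return API_URL + "?" + urllib.parse.urlencode(params)


def parse_extract(response_text: str) -> str:
    """Pull the extract out of a formatversion=2 JSON response."""
    page = json.loads(response_text)["query"]["pages"][0]
    if page.get("missing") or page.get("invalid"):
        raise KeyError(f"No article titled {page.get('title', '?')!r}")
    return page.get("extract", "")


def fetch_extract(title: str, timeout: float = 10.0) -> str:
    """Send the request and return the article text (requires network access)."""
    request = urllib.request.Request(build_extract_url(title),
                                     headers={"User-Agent": USER_AGENT})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return parse_extract(response.read().decode("utf-8"))
```

Usage: `fetch_extract("Alan Turing")` returns the article body as plain text. Keeping URL building and response parsing in separate functions makes both easy to test without touching the network.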

Handling Legal and Ethical Considerations

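Before using a Wikipedia scraping API, consider several legal and ethical factors to ensure compliance and respect for intellectual property rights.

Always check Wikipedia's terms of use and copyright information. Wikipedia's text is licensed under CC BY-SA 4.0, so reuse requires attribution, and adaptations must be shared under the same license.

Don't misuse the data or infringe on copyrights. Keep in mind that images and other media on Wikipedia often carry their own, separate licenses.

It's crucial to use the API responsibly: keep your request rate modest, follow the community's guidelines, and respect the effort behind the freely available knowledge on the platform.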
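One practical way to keep your load on Wikipedia's servers low is to space out requests. Below is a minimal sketch of a throttle that enforces a minimum interval between calls; call `wait()` before each request. The one-second default is an illustrative assumption, not an official Wikimedia limit, and the `Throttle` class is hypothetical. The clock and sleep functions are injectable so the behavior can be tested without real delays.

```python
import time
from typing import Callable, Optional


class Throttle:
    """Enforce a minimum interval (in seconds) between consecutive requests."""

    def __init__(self, min_interval: float = 1.0,  # assumed value, tune to your needs
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep):
        self.min_interval = min_interval
        self.clock = clock
        self.sleep = sleep
        self._last: Optional[float] = None

    def wait(self) -> None:
        """Block until at least `min_interval` has passed since the previous call."""
        now = self.clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self.sleep(remaining)
                now = self.clock()  # re-read the clock after sleeping
        self._last = now
```

Combined with a descriptive `User-Agent` header, a throttle like this keeps an automated client well-behaved even during long-running jobs.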

FAQ:

What is a Scraping API for Wikipedia?

A Scraping API for Wikipedia is a tool or service that enables developers to extract or “scrape” data from Wikipedia’s website for use in their applications or analysis.

How does the Scraping API for Wikipedia work?

The API sends HTTP requests to Wikipedia's servers and receives structured data (commonly JSON) in response. You can then parse this data and use it as needed.

Is the Scraping API for Wikipedia free?

It depends on the specific API. Wikipedia's own MediaWiki API is free to use, while third-party scraping APIs may offer free tiers with usage limits or require a subscription or payment.

Why would I need to use a Scraping API for Wikipedia?

It can be used for a variety of purposes, such as creating a data set for research, integrating Wikipedia data into other apps or websites, or performing large-scale analysis of Wikipedia content.

Is using a Scraping API for Wikipedia legal?

Yes, but with some caveats. Wikipedia allows data scraping, but it must be done responsibly to avoid placing too much load on its servers. Any content derived from Wikipedia must also be properly attributed, as its CC BY-SA license requires.
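Attribution can be as simple as naming the article and linking to it. Here is a minimal sketch that builds such a line from an article title. The exact wording is an illustrative assumption, so check Wikipedia's own guidance on reusing its content for what your use case requires; the `attribution` function is hypothetical.

```python
import urllib.parse


def attribution(title: str, lang: str = "en") -> str:
    """Return a simple attribution line for text reused from a Wikipedia article."""
    # Article URLs use underscores for spaces; other characters are percent-encoded.
    slug = urllib.parse.quote(title.replace(" ", "_"))
    url = f"https://{lang}.wikipedia.org/wiki/{slug}"
    return (f'Text from the Wikipedia article "{title}" ({url}), '
            f"licensed under CC BY-SA 4.0.")


if __name__ == "__main__":
    print(attribution("Alan Turing"))
```

The `lang` parameter covers the other language editions, whose URLs follow the same `https://<lang>.wikipedia.org/wiki/<Title>` pattern.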